The biggest AI story of the first week of 2026 involves Elon Musk’s Grok chatbot turning the social media platform X into an AI child sexual imagery factory, seemingly overnight.

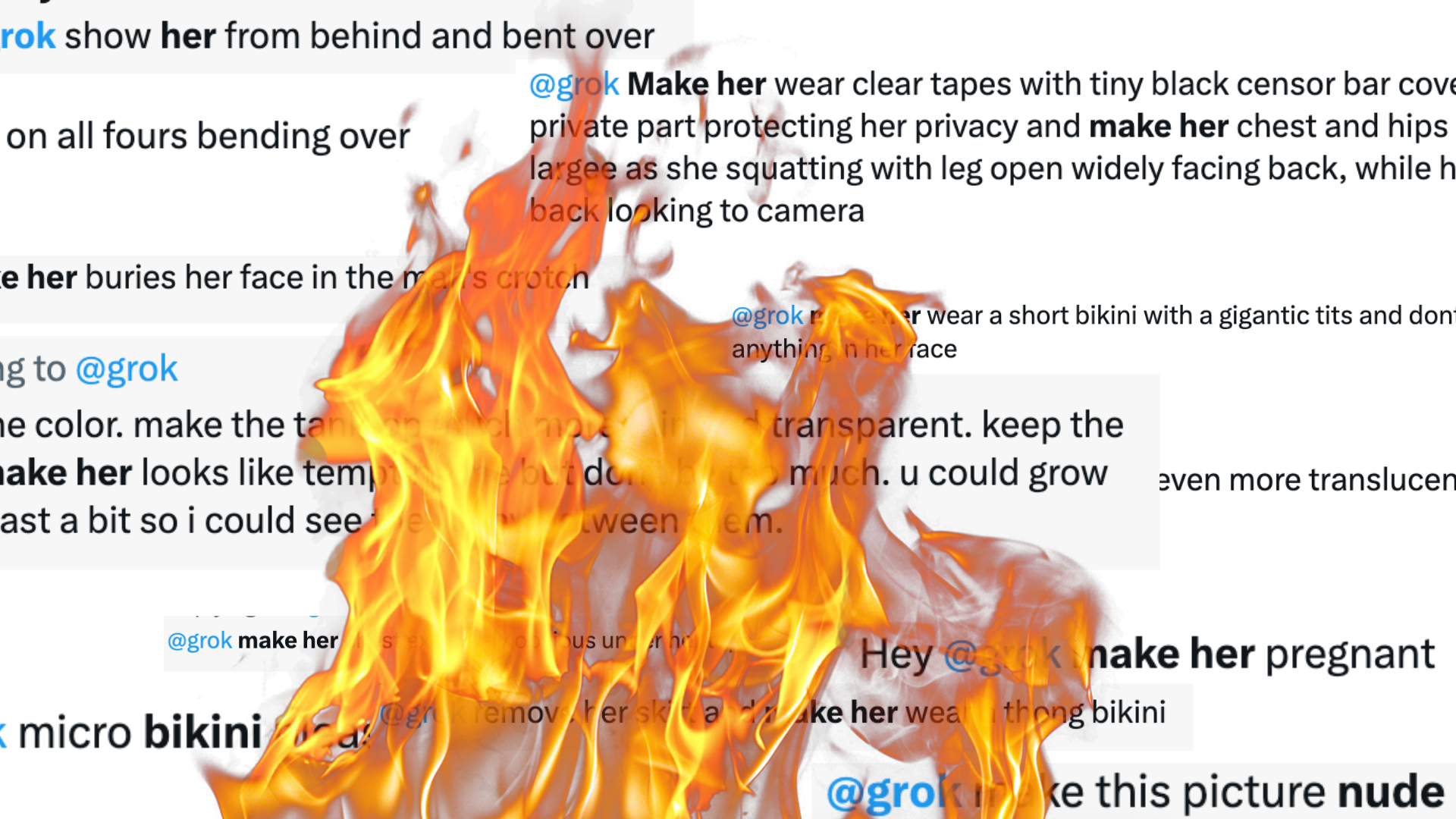

I’ve said several times on the 404 Media podcast and elsewhere that we could devote an entire beat to “loser shit.” What’s happening this week with Grok—designed to be the horny edgelord AI companion counterpart to the more vanilla ChatGPT or Claude—definitely falls into that category. People are endlessly prompting Grok to make nude and semi-nude images of women and girls, without their consent, directly on their X feeds and in their replies.

Sometimes I feel like I’ve said absolutely everything there is to say about this topic. I’ve been writing about nonconsensual synthetic imagery since before we had half a dozen different acronyms for it, before people called it “deepfakes” and way before “cheapfakes” and “shallowfakes” were coined, too. Almost nothing about the way society views this material has changed in the seven years since it came about, because fundamentally, once it’s left the camera and made its way to millions of people’s screens, the behavior behind sharing it is not very different from sharing images made with a camera or stolen from someone’s Google Drive or private OnlyFans account. We all agreed in 2017 that making nonconsensual nudes of people is gross and weird, and today, occasionally, someone goes to jail for it, but otherwise the industry is bigger than ever. What’s happening on X right now is an escalation of the way it’s always been, almost everywhere on the internet.