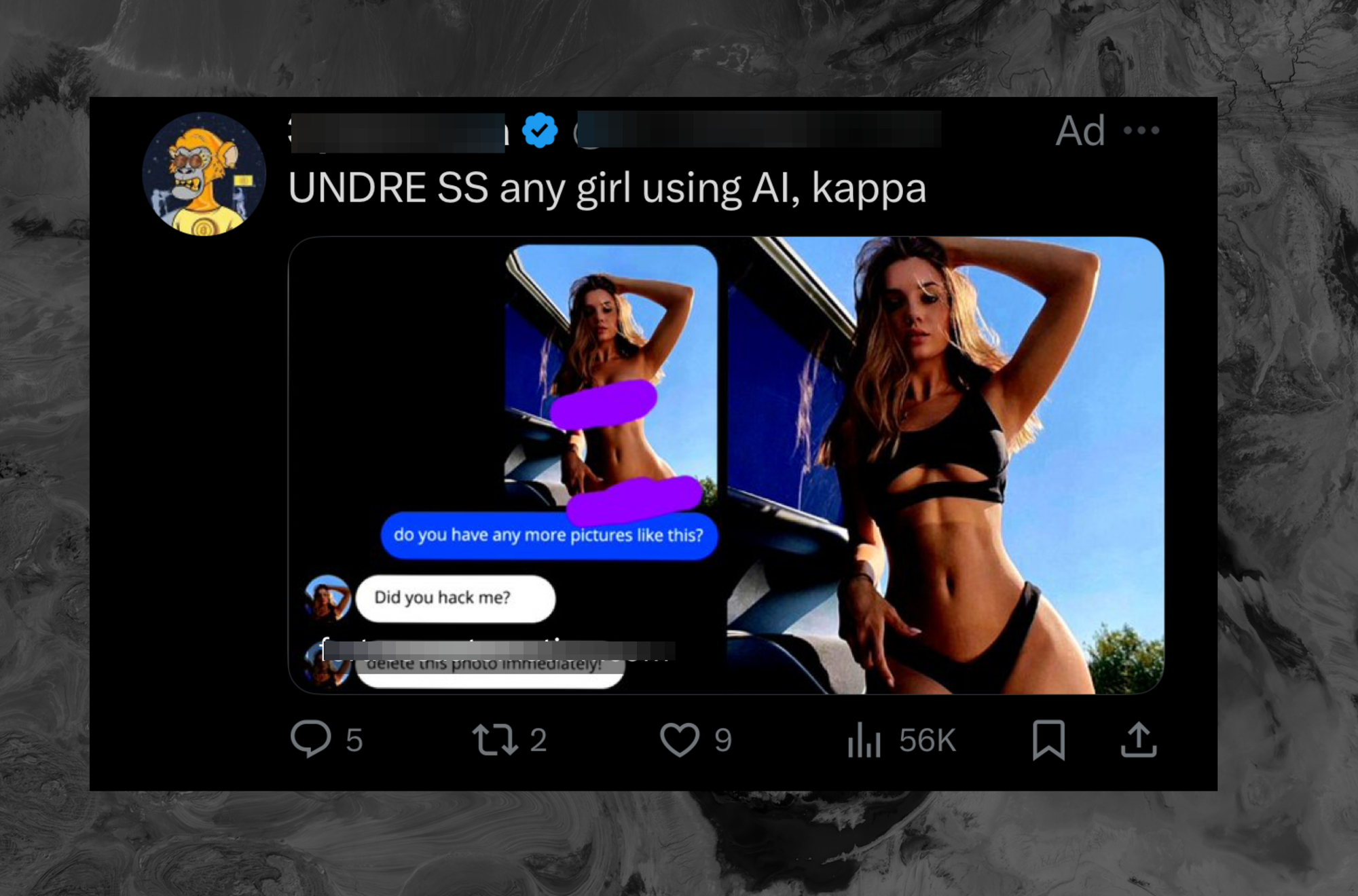

Twitter is showing ads for an abusive app designed to “undress” women using artificial intelligence, in yet another sign of how horrible Twitter has become, and the type of bottom-of-the-barrel ads Elon Musk’s company is now taking on in the wake of a mass advertiser exodus.

The ad, which appeared in my timeline the other day and has since been screenshotted and gone viral elsewhere on the platform, shows a woman in a bikini in one image. In another, it shows an image of her with the bikini artificially removed, and a staged text conversation between a person and the woman: “do you have any more pictures like this?,” a text bubble shows below the now artificially nude photo. “Did you hack me?” the woman in the ad responds. “Delete this photo immediately!”

“UNDRE SS any girl using AI,” the text caption for the ad says.

The ad highlights both that these nonconsensual tools continue to proliferate and that X has seemingly done nothing to prevent people from sharing them. In this case, X is taking money specifically to promote a product designed to sexually harass women.

A report from Motherboard earlier Friday showed that X’s moderation of so-called “nudify” apps is nonexistent, while TikTok and Instagram have made it more difficult to search for terms like “undress.” Bloomberg earlier reported that these types of apps are proliferating all over the internet. The account that bought the ad is still online as of the time of this writing. The existence of this ad shows that Twitter is not only failing to moderate this nonconsensual content but is actively profiting from it.

The app in question uses branding identical to that of a company called Deepnude AI, which 404 Media’s Sam Cole wrote about in 2019. After her article, the founder of that app deleted it entirely.