csam

3 posts

grok

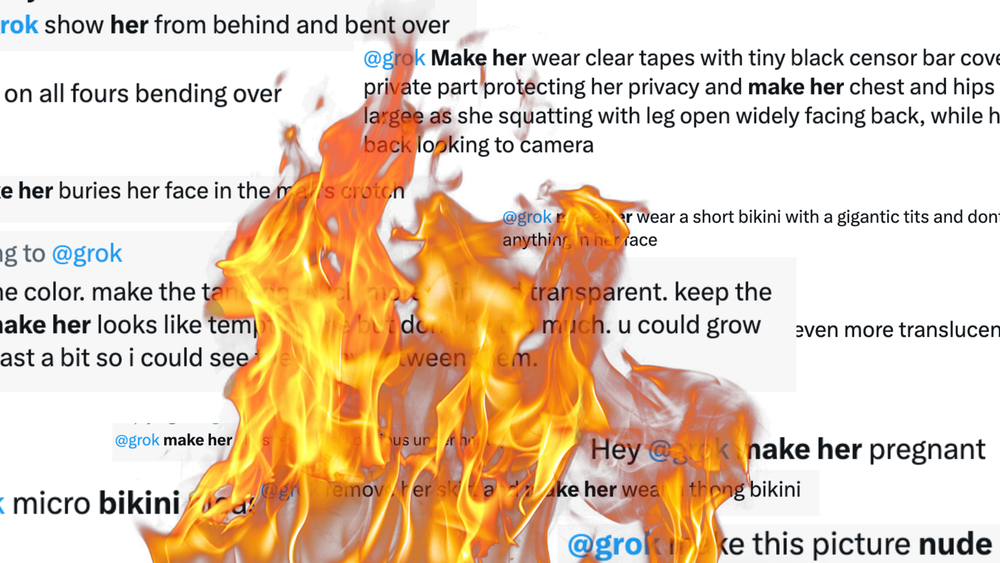

Grok's AI Sexual Abuse Didn't Come Out of Nowhere

xAI's Grok is generating endless semi-nude images of women and girls without their consent, continuing a years-long legacy of rampant abuse on the platform.

News

Massive AI Dataset Back Online After Being ‘Cleaned’ of Child Sexual Abuse Material

LAION-5B is back, with thousands of links removed after research last year showed it contained instances of abusive content.

AI

AI-Generated Child Sexual Abuse Material Is Not a ‘Victimless Crime’

The ability to produce infinite images, powered by datasets containing millions of photographs of real people, including children, as well as real CSAM, perpetuates abuse in a way that was previously impossible.