During a presentation at the International Atomic Energy Agency’s (IAEA) International Symposium on Artificial Intelligence on December 3, a US Department of Energy scientist laid out a grand vision of the future where nuclear energy powers artificial intelligence and artificial intelligence shapes nuclear energy in “a virtuous cycle of peaceful nuclear deployment.”

“The goal is simple: to double the productivity and impact of American science and engineering within a decade,” Rian Bahran, DOE Deputy Assistant Secretary for Nuclear Reactors, said.

His presentation and others during the symposium, held in Vienna, Austria, described a world where nuclear-powered AI designs, builds, and even runs the nuclear power plants it will need to sustain itself. But experts find these claims, made by one of the top nuclear scientists working for the Trump administration, to be concerning and potentially dangerous.

Tech companies are using artificial intelligence to speed up the construction of new nuclear power plants in the United States. But few know the lengths to which the Trump administration is going to pave the way, or the part it's playing in deregulating a highly regulated industry to ensure that AI data centers have the energy they need to shape the future of America and the world.

At the IAEA, scientists, nuclear energy experts, and lobbyists discussed what that future might look like. To say the nuclear people are bullish on AI is an understatement. “I call this not just a partnership but a structural alliance. Atoms for algorithms. Artificial intelligence is not just powered by nuclear energy. It’s also improving it because this is a two way street,” IAEA Director General Rafael Mariano Grossi said in his opening remarks.

In his talk, Bahran explained that the DOE has partnered with private industry to invest $1 trillion to “build what will be an integrated platform that connects the world’s best supercomputers, AI systems, quantum systems, advanced scientific instruments, the singular scientific data sets at the National Laboratories—including the expertise of 40,000 scientists and engineers—in one platform.”

Big tech has had an unprecedented run of cultural, economic, and technological dominance, expanding into a bubble that seems to be close to bursting. For more than 20 years, new billion-dollar companies appeared seemingly overnight and offered people new and exciting ways of communicating. Now Google search is broken, AI is melting human knowledge, and people have stopped buying a new smartphone every year. To keep the number going up and ensure its cultural dominance, tech (and the US government) are betting big on AI.

The problem is that AI requires massive datacenters to run and those datacenters need an incredible amount of energy. To solve the problem, the US is rushing to build out new nuclear reactors. Building a new power plant safely is a multi-year process that requires an incredible level of human oversight. It’s also expensive. Not every new nuclear reactor project gets finished, and those that do often run over budget and drag on for years.

But AI needs power now, not tomorrow and certainly not a decade from now.

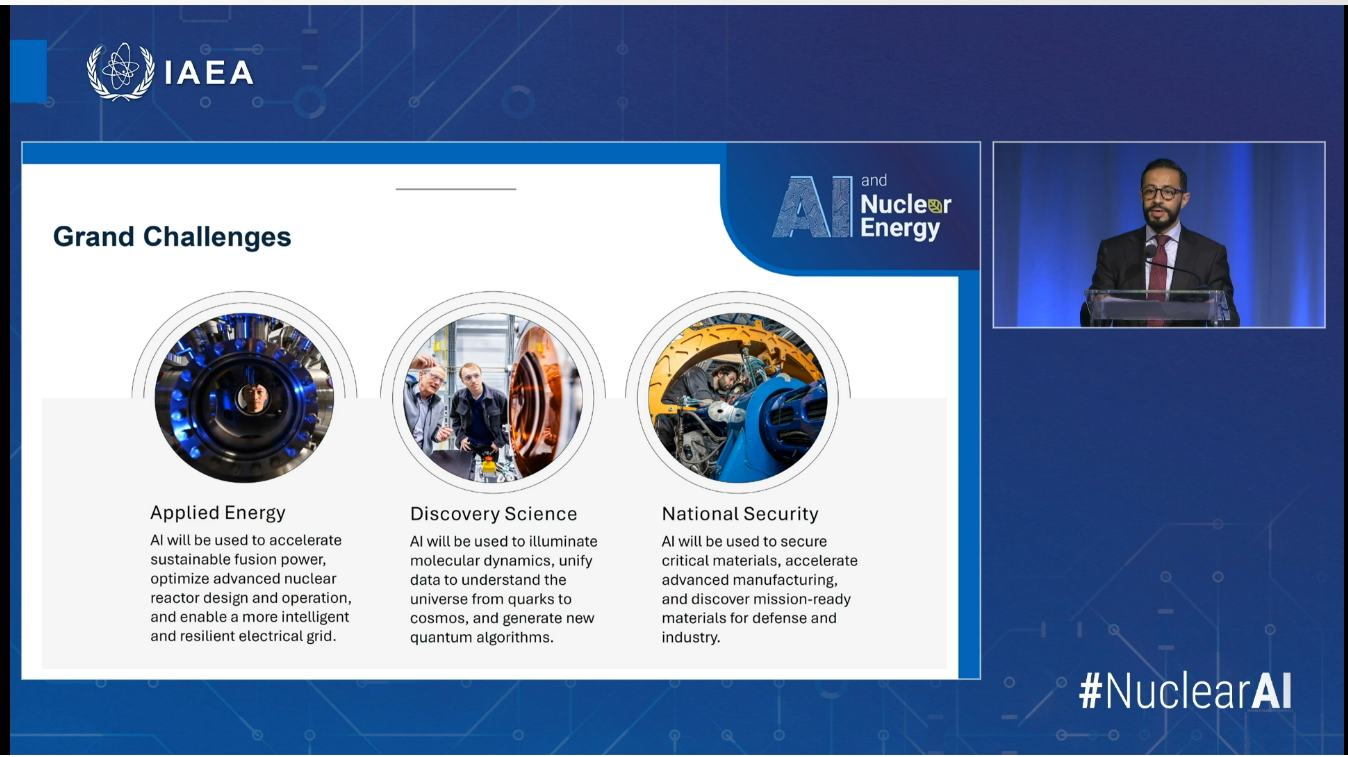

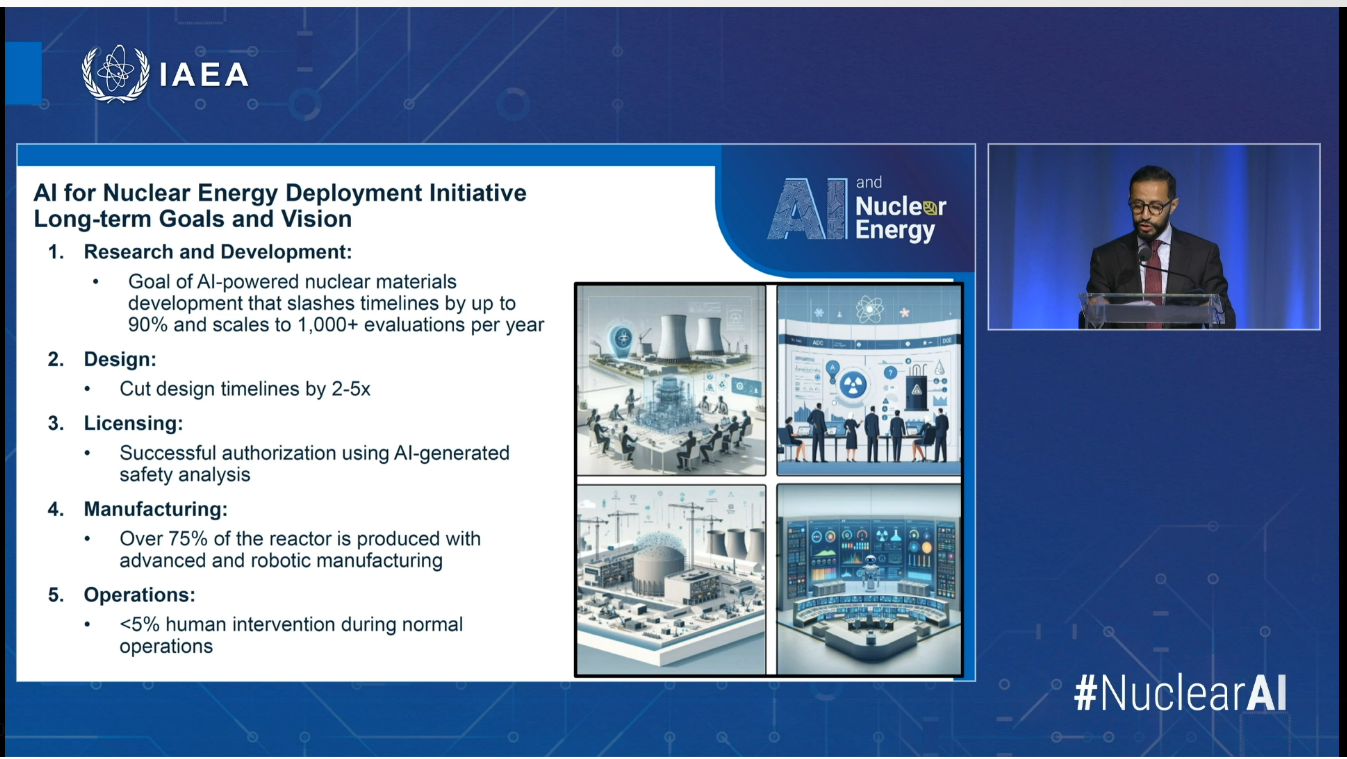

According to Bahran, the problem of AI advancement outpacing the availability of datacenters is an opportunity to deploy new and exciting tech. “We see a future of and near future, by the way, an AI driven laboratory pipeline for materials modeling, discovery, characterization, evaluation, qualification and rapid iteration,” he said in his talk, explaining how AI would help design new nuclear reactors. “These efforts will substantially reduce the time and cost required to qualify advanced materials for next generation reactor systems. This is an autonomous research paradigm that integrates five decades of global irradiation data with generative AI robotics and high throughput experimentation methodologies.”

“For design, we’re developing advanced software systems capable of accelerating nuclear reactor deployments by enabling AI to explore the comprehensive design spaces, generate 3D models, [and] conduct rigorous failure mode analyses with minimal human intervention,” he added. “But of course, with humans in the loop. These AI powered design tools are projected to reduce design timelines by multiple factors, and the goal is to connect AI agents to tools to expedite autonomous design.”

Bahran also said that AI would speed up nuclear licensing, a complex regulatory process meant to ensure that nuclear power plants are built safely. “Ultimately, the objective is, how do we accelerate that licensing pathway?” he said. “Think of a future where there is a gold standard, AI trained capacity building safety agent.”

He even said that he thinks AI would help run these new nuclear plants. “We're developing software systems employing AI driven digital twins to interpret complex operational data in real time, detect subtle operational deviations at early stages and recommend preemptive actions to enhance safety margins,” he said.

One of the slides Bahran showed during the presentation attempted to quantify the amount of human involvement these new AI-controlled power plants would have. He estimated less than five percent “human intervention during normal operations.”

“The claims being made on these slides are quite concerning, and demonstrate an even more ambitious (and dangerous) use of AI than previously advertised, including the elimination of human intervention. It also cements that it is the DOE's strategy to use generative AI for nuclear purposes and licensing, rather than isolated incidents by private entities,” Heidy Khlaaf, head AI scientist at the AI Now Institute, told 404 Media.

“The implications of AI-generated safety analysis and licensing in combination with aspirations of <5% of human intervention during normal operations, demonstrates a concerted effort to move away from humans in the loop,” she said. “This is unheard of when considering frameworks and implementation of AI within other safety-critical systems, that typically emphasize meaningful human control.”

Sofia Guerra, a career nuclear safety expert who has worked with the IAEA and US Nuclear Regulatory Commission, attended the presentation live in Vienna. “I’m worried about potential serious accidents, which could be caused by small mistakes made by AI systems that cascade,” she said. “Or humans losing the know-how and safety culture to act as required.”