Mayo Clinic, the massive U.S. hospital network, is using what it describes as “Ambient Listening” to record patient interactions with nurses, including in emergency rooms, and then using AI to process the collected data. The recording is opt-out, rather than opt-in, and at least some patients are likely not aware the recording is happening.

The recording raises questions about informed consent and whether the generated notes are accurate enough. A study last month found that AI-powered scribe tools sometimes produce much less accurate notes than humans depending on the situation.

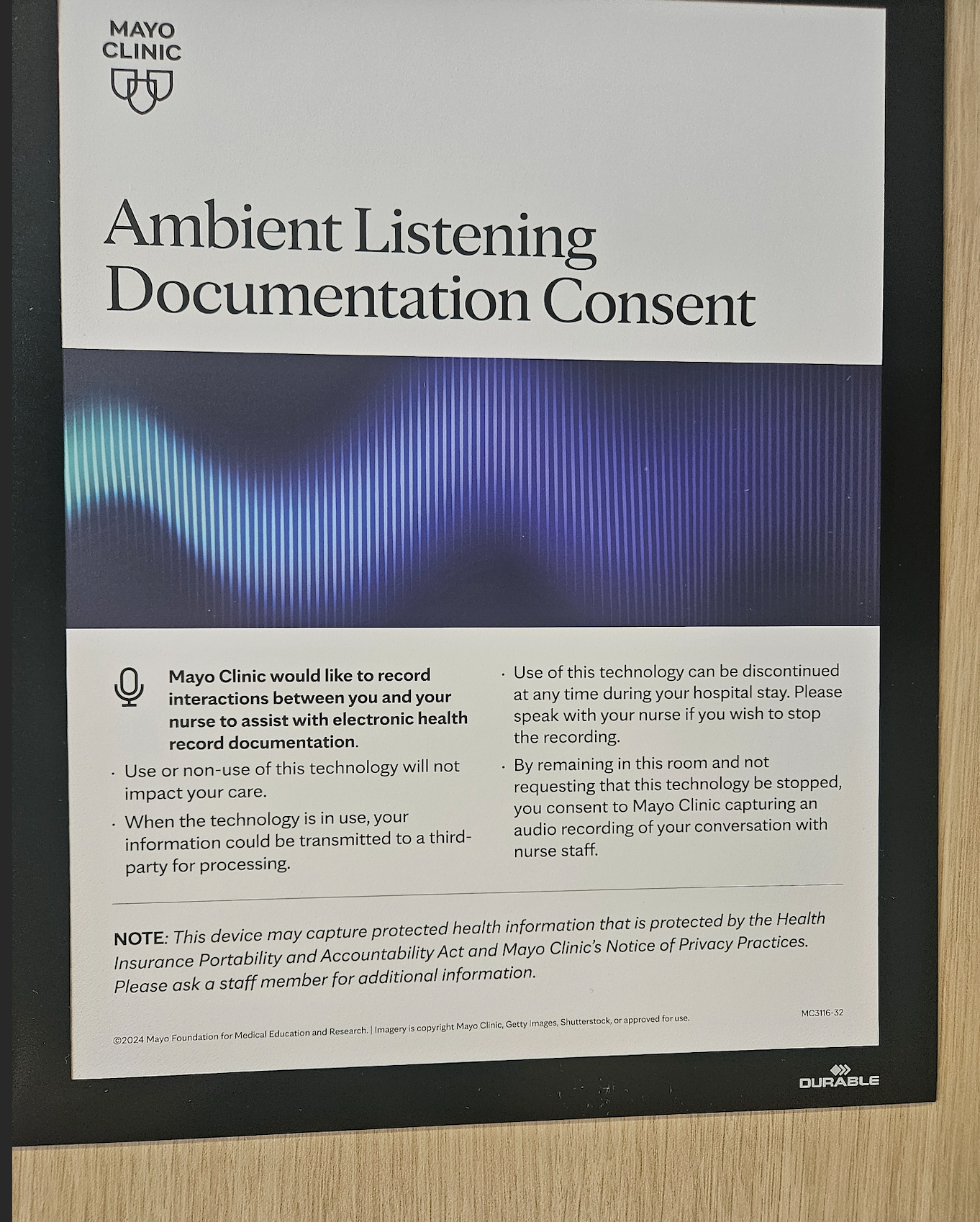

“Mayo Clinic would like to record interactions between you and your nurse to assist with electronic health record documentation,” a notice posted in a Mayo Clinic location reads. A person who said they were taking their elderly father to the emergency room shared a photo of the notice with 404 Media.

“About halfway through his visit in the [emergency department], I had to stretch my legs and just happened to notice this poster, which was not close to the bed or visitor chairs, and was the size of a regular piece of printer paper. My dad certainly didn't notice or read it,” the person told 404 Media. 404 Media granted them anonymity to talk about their parent’s medical care.

“I did not talk to any staff about it, because we were in the middle of an emergency, which is part of my whole issue with this being an opt-out thing in the emergency department. So many people might not notice this or even be healthy enough to read or notice it,” the person added.

The notice specifically says the recording device might capture data that falls under HIPAA, the U.S.’s health data protection law. “This device may capture protected health information that is protected by the Health Insurance Portability and Accountability Act and Mayo Clinic’s Notice of Privacy Practices. Please ask a staff member for additional information,” it reads.

Mayo Clinic has been using Ambient Listening for a couple of years at this point, but not all patients may be aware the recording is ongoing. A July 2024 press release says Mayo Clinic is working with medical technology giant Epic and AI company Abridge on “a generative AI ambient documentation workflow for nurses.”

On its website Abridge pitches itself as an “Enterprise-grade AI for clinical conversations—trusted by the largest healthcare systems. Measurably improving outcomes for clinicians, nurses, and revenue cycle teams at scale.” In December 2024, Johns Hopkins Medicine reached an agreement to deploy the Abridge ambient AI platform across 6,700 clinicians, six hospitals, and 40 patient-care centers, according to a press release from Abridge.

Mayo Clinic finalized an “enterprise-wide agreement” with Abridge last year, according to another press release. That paired the technology with around 2,000 clinicians who serve more than 1 million patients annually, the release says.

Neither Mayo Clinic nor Abridge responded to a request for comment.

A recent study found that human note takers create much better notes than AI-powered scribe tools. In some specific cases, the AI performed especially poorly compared to a human: when there was background noise; when the clinician and patient were wearing masks; and to a lesser extent when the patient had an accent, according to the American Medical Journal.

Doctors around the country are increasingly using AI in various forms, such as dictating notes to be added to a patient’s file. On the consumer side, a recent study found that chatbots can give out wildly different and incorrect medical advice to users.