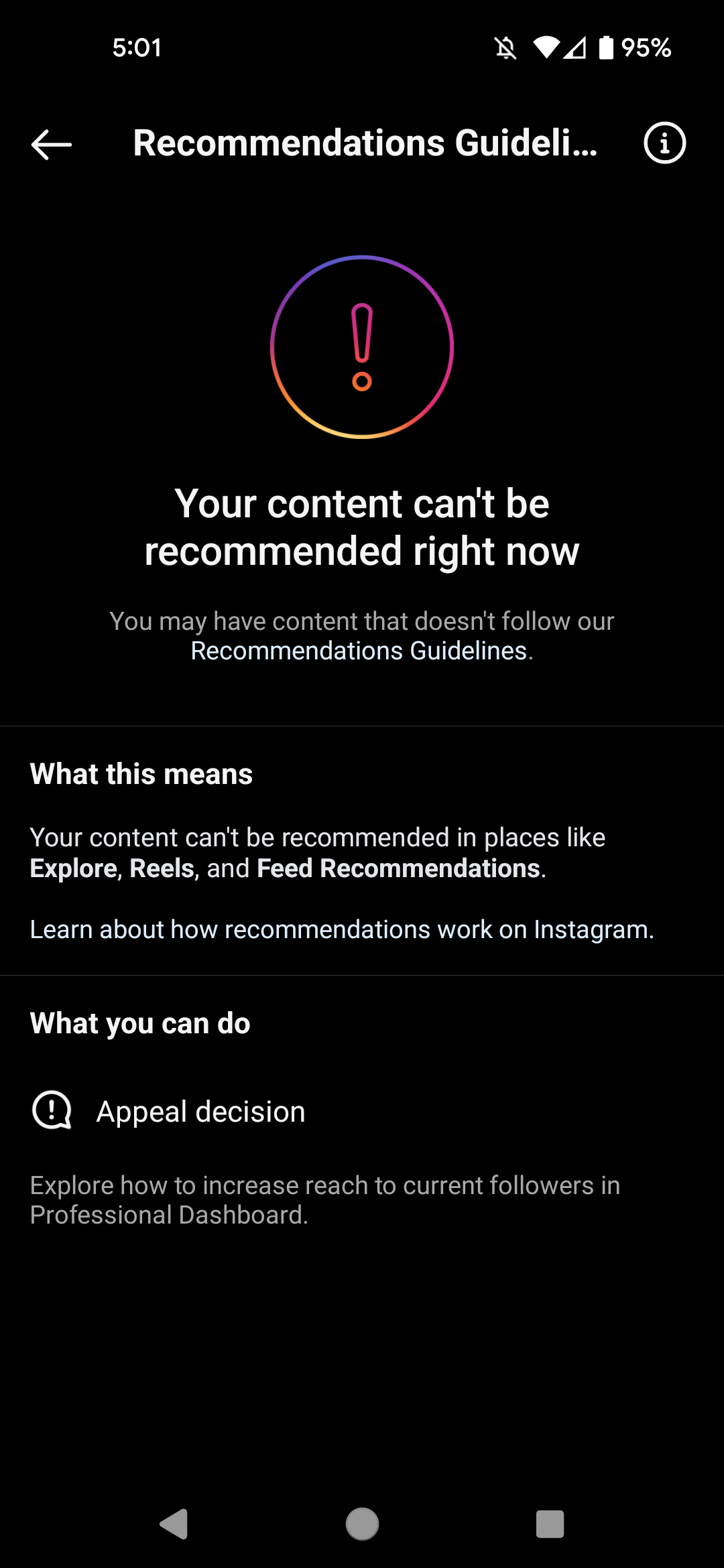

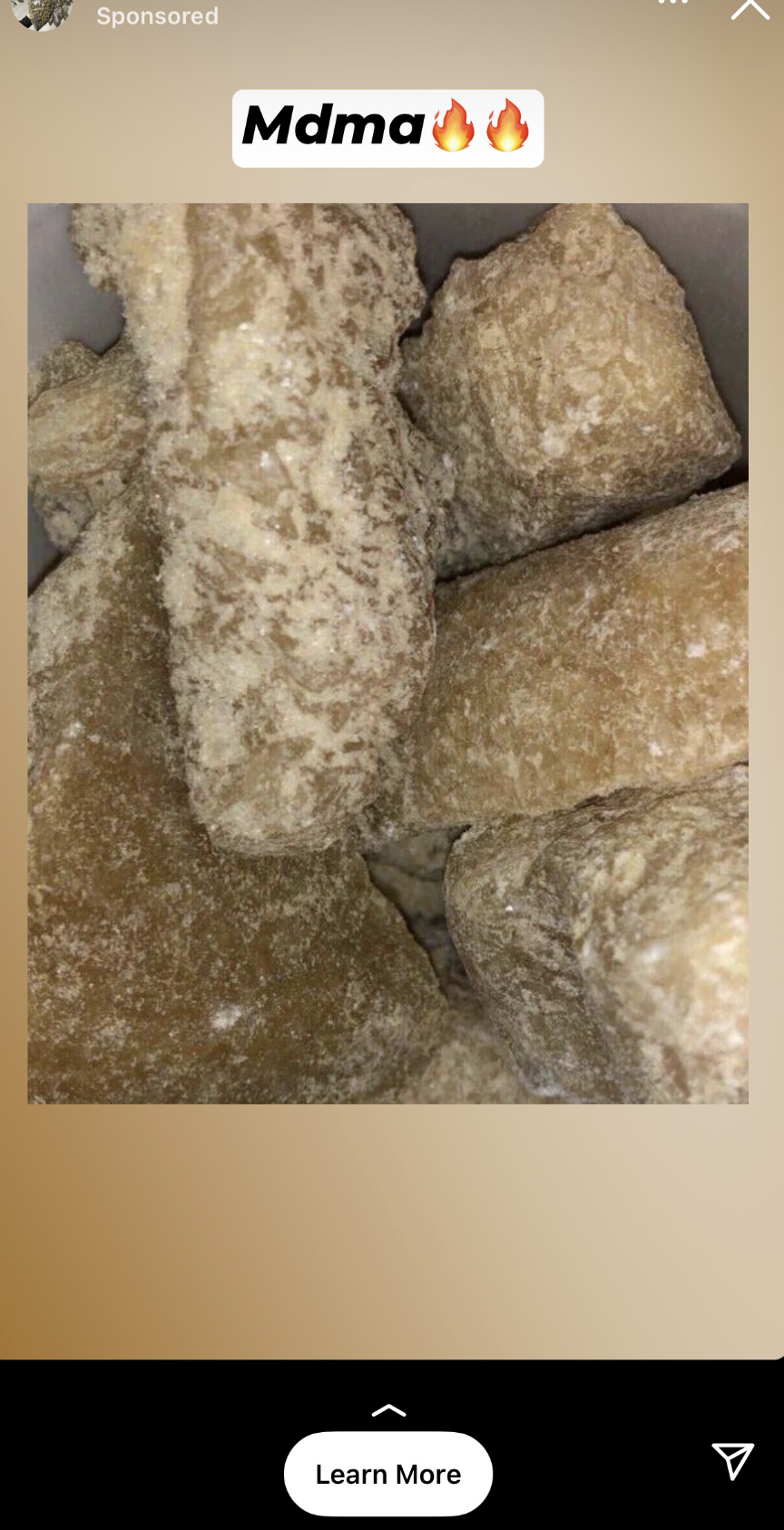

Instagram limited the reach of a 404 Media investigation into ads for drugs, guns, counterfeit money, hacked credit cards, and other illegal content on the platform within hours of us posting it. Instagram said it did this because the post, which was about content Instagram failed to moderate on its own platform, didn’t follow its “Recommendation Guidelines.” Later that evening, while that post was being throttled, I got an ad for “MDMA,” and Meta’s ad library is still full of illegal content that can be found within seconds.

This means Meta continues to take money from people blatantly advertising drugs on the platform while limiting the reach of reporting about that content moderation failure. Instagram's Recommendation Guidelines limit the reach of content that "promotes the use of certain regulated products such as tobacco or vaping products, adult products and services, or pharmaceutical drugs."

Meta reversed its decision on 404 Media’s post after we appealed it and deleted the words “guns, meth, pills, and weapons” from our caption, which described the types of content that Instagram is taking money for and injecting into users’ feeds.

As I reported earlier this week, most of these ads are for blatantly illegal products and services and link directly to Telegram accounts where drugs, guns, and hacked accounts can be bought. A sampling of these ads can be found in seconds by searching for “t.me” on Meta’s Ad Library.

I have reported on content moderation for many years, and understand how difficult moderating content at scale can be. But the fact remains that the company has a massive, obvious problem with how it reviews and approves ads. An expert who studies content moderation told us that our investigation suggests Meta is not as sophisticated at reviewing and approving ads as it is at moderating normal posts on its platforms.

“You need to think about how much of an issue, historically, general content moderation has been,” Karan Lala of the Integrity Institute, which is made up of former integrity team workers at companies like Facebook, told me. “The maturity of the different integrity teams is relevant to the kind of problems platforms have had in the past.”

In Meta’s case, its biggest ads-related issue has been with political ads. During the 2020 election campaign, it simply banned all political ads (months after the presidential election, it began allowing them again).

“With ads, a lot of the issue has been regulatory: Election ads spreading misinformation,” Lala said. “Ads for spam, ads for low quality content. Moderation of that seems a little less developed. In theory, these integrity teams should be feeding into the same systems. Ads should theoretically be going through a review process … a lot of these things are something that just should be getting caught [at the review process.]”

Meta did not respond to a request for comment. At the time of this writing, there are thousands of ads on Instagram and Facebook that link to Telegram channels and groups. Many of the most recent ones are for groups selling acid, MDMA, and hacked credit cards.